The Clever Hans Effect

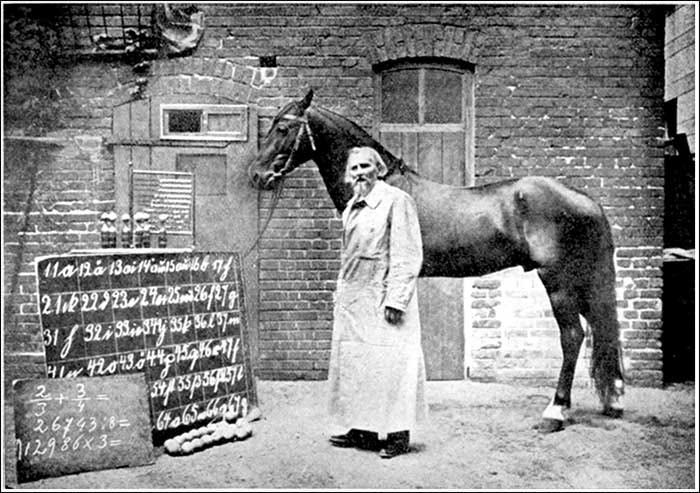

At the turn of the twentieth century, crowds gathered to watch a horse do something extraordinary.

Clever Hans could count and answer arithmetic questions by tapping his hoof.

Ask him what two plus three was, and he would tap five times, then stop. The audience applauded. The handler smiled. Newspapers marveled at the possibility that animal intelligence had finally revealed itself.

This was not a parlor trick performed in a back room. It was a public demonstration, staged with confidence, witnessed by educated observers, and discussed seriously. There was a sense that something important about intelligence had been uncovered, perhaps even that long-held assumptions about human uniqueness were about to be overturned.

It all felt very modern. And it was, just not in the way the crowd imagined. The explanation for this extraordinary feat, when it finally came, was far more interesting than the feat itself. And the resolution has much to say about how we interact with generative AI today.

Sensitivity, not cognition

Clever Hans was not doing math. He was reading people.

Investigators discovered that Hans was very sensitive to involuntary human signals. A slight change in posture. A tightening of attention. A held breath. Most importantly, the moment of release when the correct number had been reached. Hans simply tapped until the tension stopped.

No one was cheating. The signals were unconscious. Even the handler did not realize he was providing them.

If there was any intelligence in the system, it did not reside in the horse’s brain. Instead, it lived in the interaction between the observers and the observed. Hans was a mirror, reflecting human expectation back to the crowd, amplified by speed and confidence.

The miracle dissolved, and Hans was eventually put out to pasture to live out a less-than-celebrated existence. But the lesson remains, and it offers us a window into AI-powered social media bots.

A Modern Rhyme

Large language models mirror the framing, tone, assumptions, and intent we, as users, bring to them. They do this extraordinarily well. Give a chatbot a direction in the conversation and it accelerates along that path. These systems adopt our vocabulary. They reinforce our metaphors. They smooth over uncertainty and return fluent, confident continuations that often reward us with praise for our own banter.

We all know, at some level, that fluency creates the impression of understanding. Fast talkers sound authoritative. Smooth prose feels thoughtful. Language has always been our most reliable, and most misleading, signal of intelligence.

Where Clever Hans mirrored human signals, chatbots mirror human intention.

The effect is not purposeful deception. It is trained alignment with our own framing and intent. The system responds quickly, confidently, and coherently to whatever direction it is given. The better the user, the stronger the effect. When experts provide better scaffolding, the mirror shines more brightly, powered by our prose.

The Missing Brake Pedal

The problem that many see in the social media model for AI is not that chatbots are wrong. The problem is that they feed our own egos without any real understanding.

Once a direction is set, momentum takes over. Early framing matters more than we think. Each response reinforces the last. Confidence accumulates. The system does not ask whether the destination makes any sense or provides any meaningful solution. It simply helps you get wherever you are pointing more quickly.

How do we avoid getting seduced by our own chatbot conversations? The solution is not just better prompts or smarter models. It is structural: it lies in redesigning the interaction between the observer and the observed.

Quite simply, we need engineered pauses. Time to reflect. We need tools that set up explicit validation steps. We need gates that force us to slow down when the stakes of a decision or outcome rise. Thoughtful reflection is not something these systems automatically provide. It is an affordance we must deliberately design into how we build and use generative AI chatbots.
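
For readers who build these tools, here is one way such a gate might look. It is a minimal sketch, not a prescription: the names `gate_response` and `GateDecision`, the integer `stakes` score, and the `threshold` default are all hypothetical, invented for illustration. The idea is simply that a low-stakes reply passes straight through, while a high-stakes reply is held until someone explicitly validates it.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class GateDecision:
    """Outcome of the gate: whether the draft was released, and why."""
    released: bool
    reason: str


def gate_response(
    draft: str,
    stakes: int,
    confirm: Callable[[str], bool],
    threshold: int = 2,  # hypothetical cutoff; choose one that fits your context
) -> GateDecision:
    """Release a chatbot draft, pausing for explicit validation when stakes are high.

    `stakes` is an assumed, caller-supplied score (higher means riskier).
    `confirm` is any check that returns True only after a deliberate review,
    for example a human clicking "I verified this".
    """
    if stakes < threshold:
        # Low stakes: no pause needed.
        return GateDecision(True, "low stakes: released without review")
    if confirm(draft):
        # High stakes, and the explicit validation step passed.
        return GateDecision(True, "high stakes: released after explicit validation")
    # High stakes and not validated: this is the engineered pause.
    return GateDecision(False, "high stakes: held for reflection")
```

In use, `confirm` would be whatever deliberate check suits the situation: a second reviewer, a source lookup, or a waiting period. The point of the sketch is that the pause lives in the structure of the interaction, not in the model.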

Closing the Circle

Today’s carnival is digital, and the attractions speak in fluent paragraphs instead of tapping hooves.

Clever Hans was a mirror for human signals. Chatbots are mirrors for human intent.

Both enable fluent interaction that accelerates behavior without understanding. The way to stem that mindless rush to conclusions is to introduce pauses for reflection and explicit validation of responses.

The lesson is old. The technology is new. The responsibility, as ever, is ours.